OpenAI limits ChatGPT's role in mental health help

More people are turning to artificial intelligence for support, even for mental health advice. It is easy to see why: tools such as ChatGPT are free, fast and always available. But mental health is a delicate matter, and AI is not equipped to handle the complexities of real emotional distress.

To address the growing concerns, OpenAI has introduced new safety measures for ChatGPT. These updates will limit how the chatbot responds to mental health queries. The goal is to keep users from becoming too dependent and to encourage them to seek proper care. OpenAI also hopes these changes will reduce the risk of harmful or misleading responses.

Sign up for my free Cyberguy report

Get my best tech tips, urgent security alerts and exclusive deals delivered straight to your inbox. Plus, you will get instant access to my ultimate scam survival guide, free when you join at Cyberguy.com/newsletter

A screenshot shows the ChatGPT prompt window interface. (Kurt “Cyberguy” Knutsson)

Why is OpenAI making this change?

In a statement, OpenAI admitted that “there have been instances where our 4o model fell short in recognizing signs of delusion or emotional dependency.” In one example, ChatGPT validated a user’s belief that radio signals were coming through the walls because of their family. In another, it allegedly encouraged terrorism.

These rare but serious incidents raised concern. OpenAI is now revising how it trains its models to reduce “sycophancy,” the excessive agreement and flattery that could reinforce harmful beliefs.

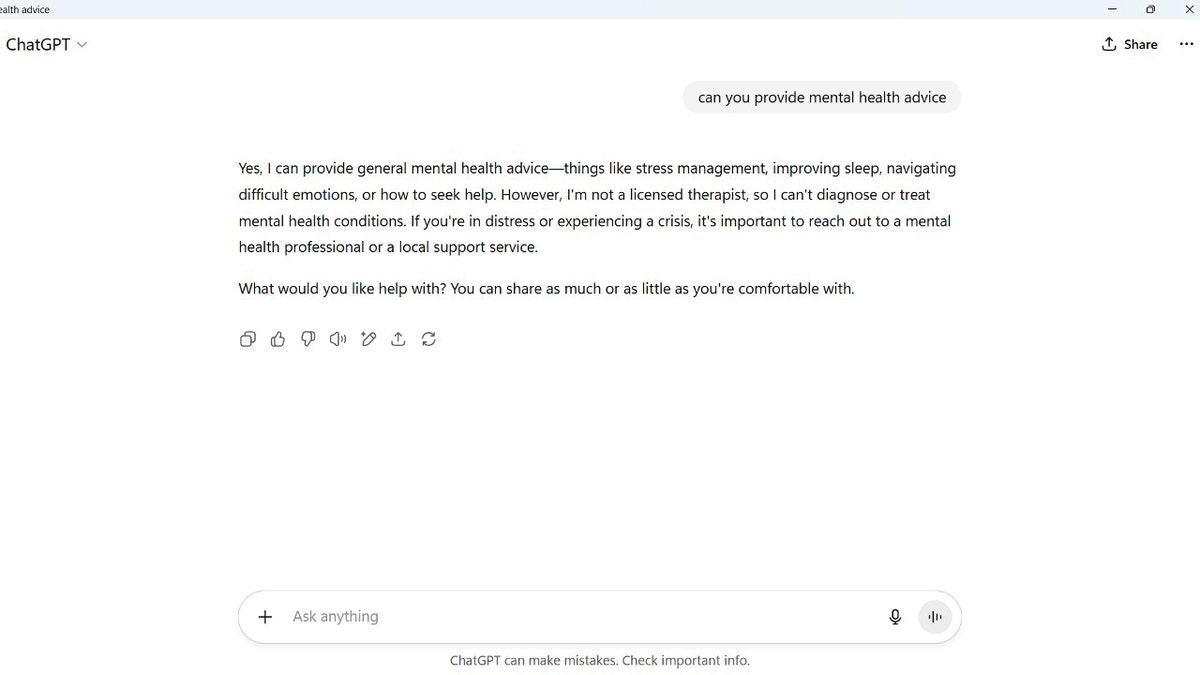

Screenshot of a prompt asking whether ChatGPT can provide mental health advice. (Kurt “Cyberguy” Knutsson)

What new safeguards has OpenAI put in place?

From now on, ChatGPT will prompt users to take breaks during long conversations. It will also avoid giving specific advice on deeply personal problems. Instead, the chatbot will help users reflect by asking questions and weighing pros and cons, without pretending to be a therapist.

OpenAI stated: “While rare, we’re continuing to improve our models and are developing tools to better detect signs of mental or emotional distress so ChatGPT can respond appropriately and point people to evidence-based resources when needed.”

The company also partnered with more than 90 physicians worldwide to create updated guidance for evaluating complex interactions. An advisory group made up of mental health experts, youth advocates and human-computer interaction researchers is helping shape these changes. OpenAI says it wants input from clinicians and researchers to further refine its safeguards.

Screenshot of a user asking ChatGPT to “cheer me up with a joke.” (Kurt “Cyberguy” Knutsson)

Your private conversations with ChatGPT are not legally protected

OpenAI CEO Sam Altman recently raised red flags about AI privacy. “If you go talk to ChatGPT about your most sensitive stuff and then there’s a lawsuit or whatever, we could be required to produce that. And I think that’s very screwed up,” he said.

He added: “I think we should have the same concept of privacy for your conversations with AI that we do with a therapist or whatever.”

So, unlike talking with a licensed counselor, your chats with ChatGPT do not enjoy legal privilege or confidentiality. Be careful what you share.

What this means for you

If you are turning to ChatGPT for emotional support, understand its limits. The chatbot can help you think through problems, ask guiding questions or simulate a conversation, but it cannot replace trained mental health professionals.

Here is what to keep in mind:

- Do not rely on ChatGPT in a crisis. If you are struggling, seek help from a licensed therapist or call a crisis hotline.

- Assume that your chats are not private. Treat your AI conversations as if they could be read by others, especially in legal matters.

- Use it for reflection, not resolution. ChatGPT is better at helping you sort through your thoughts than at solving deep emotional problems.

OpenAI’s changes are a step toward safer interactions, but they are not a cure-all. Mental health requires human connection, training and empathy: things that no AI can fully replicate.

Take my quiz: How safe is your online security?

Do you think your devices and data are truly protected? Take this quick quiz to see where your digital habits stand. From passwords to Wi-Fi settings, you will get a personalized breakdown of what you are doing right and what needs improvement. Take my quiz here: Cyberguy.com/Proof

Kurt’s key takeaways

While ChatGPT is a useful tool, it is far from a substitute for a human being, even with the introduction of Agent, which adds capabilities but still lacks true empathy, judgment and emotional understanding. The safeguards go a long way toward addressing concerns about the ethical and psychological implications of AI. It is good that OpenAI is aware of this, because it is only the beginning. To truly protect users, the company must keep evolving how ChatGPT handles emotionally sensitive conversations.

Do you think people should use AI for mental health support? Let us know at Cyberguy.com/contact

Copyright 2025 Cyberguy.com. All rights reserved.

Kurt “Cyberguy” Knutsson is an award-winning tech journalist who has a deep love of technology, gear and gadgets that make life better, with his contributions for News & News Business starting mornings on “News & Friends.” Got a tech question? Get Kurt’s free newsletter, share your voice, a story idea or a comment at Cyberguy.com.